May 3, 2023

Posted by Jay Livingston

A friend of mine here in New York was the victim of a property crime, a larceny. The bad guys had broken into his car and taken whatever wasn’t locked down, mostly books as I recall (this happened a long time ago). He went to the local precinct to report it. Eventually the desk sergeant acknowledged his presence. “Somebody broke into my car and took all my stuff.”

“So what do you want me to do about it?” said the sergeant.

The officer’s response is understandable and quite reasonable. There’s no way the thief could be caught or the property recovered. Besides, this type of crime happened frequently. To fill out the paperwork or do anything would just be a waste of police time. My friend knew all this, but he was still not happy about the way the cops treated his victimization.

I remembered this anecdote when I saw some data from Portland showing low levels of satisfaction with a crime reporting system there. It also reminded me of the previous post about satisfaction with responses to medical questions. When people seek immediate medical advice online, they are more satisfied with the responses of a non-human (ChatGPT) than with those of a doctor. Doctors were five times more likely to get low ratings for both the quality of the information and the empathy conveyed. Three-fourths of their responses were rated low on empathy.

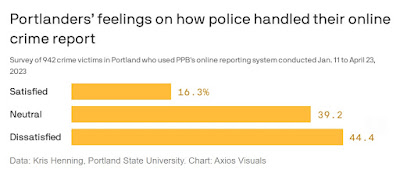

Something similar could be happening when people are victims of crime. In Portland, as in many cities, victims of non-violent crimes can use the online reporting system rather than calling the cops. Most people find the system easy to use, and it frees police resources for other matters, but so far it’s not getting high marks. Only 16% of those who used it said they were “Satisfied” and nearly three times that many said they were “Dissatisfied.”

Could ChatGPT help? As with medical reporting, the crucial factor is whether the police seem to care about the case. People who received a call or email from the police in response to their online report were twice as likely to be satisfied, even though the callback sometimes came weeks after the victim had filed the report and even though many of the victims of property crime merely want a case number for insurance purposes.

ChatGPT or some similar program could send this kind of email and respond to questions the victim might have. I’m not sure what ChatGPT’s initial message would sound like, but it wouldn’t be, “So what do you want me to do about it?” Putting ChatGPT on the case wouldn’t have any effect on the crime rate or the clearance rate, but it might make a difference in how people thought about their local police.